What is a neural network?

A neural network consists of many layers, each with many perceptrons (neurons). Many implementations fit in 60 sloc, but they are hard to read. So let's build our own in 60 sloc without ANY library.

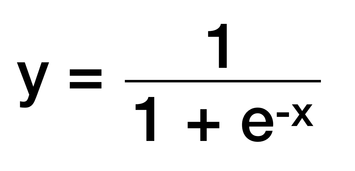

Sigmoid

Sigmoid is an activation function that maps any input to a value between 0 and 1, which adds non-linearity to our neural network.

Talk is cheap, show me the code

1) We start by defining the sigmoid function and the sigmoid gradient function. The gradient scales every weight adjustment, so we change our weights just a little bit at a time.
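A minimal sketch of these two functions, assuming the standard logistic sigmoid and its derivative. The name "sigmoidGradient" is the one used later in this post; the function bodies are a reconstruction, not necessarily the original code.

```javascript
// Logistic sigmoid: squashes any real number into the interval (0, 1).
function sigmoid(x) {
  return 1 / (1 + Math.exp(-x));
}

// Derivative of the sigmoid: s(x) * (1 - s(x)).
// It peaks at 0.25 for x = 0 and shrinks towards 0 as |x| grows,
// which is why it keeps the weight adjustments small.
function sigmoidGradient(x) {
  const s = sigmoid(x);
  return s * (1 - s);
}

console.log(sigmoid(0));         // 0.5
console.log(sigmoidGradient(0)); // 0.25
```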

2) Let's define our Neuron class. Its forward function computes the weighted sum of the neuron's inputs and normalizes it to a value between 0 and 1 using sigmoid. Its backward function is then used to edit the weights.
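The forward half of the neuron could look roughly like this. The constructor signature and the random starting weights in [-1, 1) are assumptions made for this sketch. "this.sum" and "this.inputs" are stored because the backward step needs them.

```javascript
const sigmoid = (x) => 1 / (1 + Math.exp(-x));

class Neuron {
  // inputCount: number of inputs, including the bias input if one is used.
  constructor(inputCount) {
    // Assumption: random starting weights in [-1, 1).
    this.weights = Array.from({ length: inputCount }, () => Math.random() * 2 - 1);
  }

  forward(inputs) {
    this.inputs = inputs; // remembered for the backward pass
    // Weighted sum: inputs[0] * weights[0] + inputs[1] * weights[1] + ...
    this.sum = inputs.reduce((acc, input, i) => acc + input * this.weights[i], 0);
    return sigmoid(this.sum);
  }
}

const neuron = new Neuron(3);
neuron.weights = [0, 0, 0];             // fixed weights so the result is predictable
console.log(neuron.forward([1, 0, 1])); // 0.5, because the weighted sum is 0
```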

3) Adjusting weights:

We make each adjustment proportional to the size of the error and scale it with "sigmoidGradient"

(this ensures that we adjust just a little bit)

We pass "this.sum" from "forward()" into sigmoidGradient and compute input * sigmoidGradient * error for every input (an input of 0 means no adjustment for that weight, an input of 1 means the full adjustment)

> this creates the "deltas" array

> we then adjust the weights by subtracting the deltas
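The weight adjustment described above can be sketched as a "backward" method. This is an illustration under assumptions: the constructor signature is hypothetical, and the method also returns one error value per input (computed with the weights from before the update) so that earlier layers can be adjusted too.

```javascript
const sigmoid = (x) => 1 / (1 + Math.exp(-x));
const sigmoidGradient = (x) => sigmoid(x) * (1 - sigmoid(x));

class Neuron {
  constructor(inputCount) {
    // Assumption: random starting weights in [-1, 1).
    this.weights = Array.from({ length: inputCount }, () => Math.random() * 2 - 1);
  }

  forward(inputs) {
    this.inputs = inputs;
    this.sum = inputs.reduce((acc, x, i) => acc + x * this.weights[i], 0);
    return sigmoid(this.sum);
  }

  // error = computed output - expected output for this neuron.
  backward(error) {
    const gradient = sigmoidGradient(this.sum);
    // Error handed back to each input, using the weights *before* the update.
    const inputErrors = this.weights.map((w) => w * gradient * error);
    // One delta per weight: input * sigmoidGradient * error.
    const deltas = this.inputs.map((x) => x * gradient * error);
    this.weights = this.weights.map((w, i) => w - deltas[i]);
    return inputErrors;
  }
}

const neuron = new Neuron(3);
neuron.weights = [0, 0, 0];               // fixed weights for a predictable demo
const output = neuron.forward([1, 0, 1]); // 0.5
neuron.backward(output - 1);              // expected output was 1, so error = -0.5
console.log(neuron.weights);              // weights are now 0.125, 0 and 0.125
```

The weight that belongs to the 0 input stays untouched, while the other two move by 1 * 0.25 * 0.5 = 0.125 towards a larger output.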

4) Layer class:

We create a "neurons" array and fill it with neurons.

Then we define the forward function and the backward function. The backward function collects the values that each neuron passes back and adds them up.
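A sketch of such a Layer class follows. It assumes a Neuron whose "backward" edits its own weights and returns one error value per input; that return value, the constructor arguments and the random starting weights are assumptions of this sketch.

```javascript
const sigmoid = (x) => 1 / (1 + Math.exp(-x));
const sigmoidGradient = (x) => sigmoid(x) * (1 - sigmoid(x));

class Neuron {
  constructor(inputCount) {
    this.weights = Array.from({ length: inputCount }, () => Math.random() * 2 - 1);
  }
  forward(inputs) {
    this.inputs = inputs;
    this.sum = inputs.reduce((acc, x, i) => acc + x * this.weights[i], 0);
    return sigmoid(this.sum);
  }
  backward(error) {
    const scaled = sigmoidGradient(this.sum) * error;
    const inputErrors = this.weights.map((w) => w * scaled); // pre-update weights
    this.weights = this.weights.map((w, i) => w - this.inputs[i] * scaled);
    return inputErrors;
  }
}

class Layer {
  constructor(neuronCount, inputCount) {
    // Fill the "neurons" array with freshly created neurons.
    this.neurons = Array.from({ length: neuronCount }, () => new Neuron(inputCount));
  }

  // Every neuron sees the same inputs; the layer returns one output per neuron.
  forward(inputs) {
    return this.neurons.map((neuron) => neuron.forward(inputs));
  }

  // errors[i] belongs to neuron i. Each neuron returns one value per input,
  // and the layer adds those arrays up element by element.
  backward(errors) {
    return this.neurons
      .map((neuron, i) => neuron.backward(errors[i]))
      .reduce((total, errs) => total.map((t, j) => t + errs[j]));
  }
}

const layer = new Layer(2, 3);
const outputs = layer.forward([1, 0, 1]);
console.log(outputs.length);     // 2, one output per neuron
const inputErrors = layer.backward([0.1, -0.2]);
console.log(inputErrors.length); // 3, one summed error per input
```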

5) Network class

Our network will consist of 2 layers.

The input ([0, 1]) gets passed into the concat method inside the reduce function, which puts the bias in front of it, so the result [1, 0, 1] is sent forward.

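6) The network's forward function uses reduce to pass the data through the layers: for every layer it adds the bias ([1].concat) and calls forward on the layer, which calls forward on each of its neurons. The result is passed on to the next layer.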
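The forward pass through the network could be sketched as follows. The layer sizes (3 hidden neurons, 1 output neuron) and the random starting weights are assumptions; the text only fixes the number of layers and the "[1].concat" bias trick. Only the forward half of Neuron and Layer is shown here.

```javascript
const sigmoid = (x) => 1 / (1 + Math.exp(-x));

class Neuron {
  constructor(inputCount) {
    this.weights = Array.from({ length: inputCount }, () => Math.random() * 2 - 1);
  }
  forward(inputs) {
    this.inputs = inputs;
    this.sum = inputs.reduce((acc, x, i) => acc + x * this.weights[i], 0);
    return sigmoid(this.sum);
  }
}

class Layer {
  constructor(neuronCount, inputCount) {
    this.neurons = Array.from({ length: neuronCount }, () => new Neuron(inputCount));
  }
  forward(inputs) {
    return this.neurons.map((neuron) => neuron.forward(inputs));
  }
}

class Network {
  constructor() {
    // Assumed sizes: 2 inputs + bias feed 3 hidden neurons,
    // 3 hidden outputs + bias feed 1 output neuron.
    this.layers = [new Layer(3, 3), new Layer(1, 4)];
  }

  forward(inputs) {
    // reduce threads the data through the layers; [1].concat(...) puts the
    // constant bias input in front of whatever the previous step produced.
    return this.layers.reduce(
      (layerInputs, layer) => layer.forward([1].concat(layerInputs)),
      inputs
    );
  }
}

console.log([1].concat([0, 1]));      // the bias 1 followed by the inputs 0 and 1
const network = new Network();
console.log(network.forward([0, 1])); // one value between 0 and 1, random before training
```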

7) Learning: forward the data, subtract the expected result from the computed one and push the differences into an errors array. Then reverse the layers, push the errors back through them and update the layers (updating neurons = editing weights).
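To isolate the two moving parts of this step, here is a small sketch with hypothetical stand-in layers that only record the order in which they are called. The names "makeLayer" and "calls" and the sample numbers are made up for illustration; a real layer would also edit its weights inside "backward".

```javascript
// Stand-in layers (hypothetical): they record the call order and pass the errors on.
const calls = [];
const makeLayer = (name) => ({
  backward(errors) {
    calls.push(name);
    return errors;
  },
});
const layers = [makeLayer("hidden"), makeLayer("output")];

// Error per output: computed result minus expected result.
const computed = [0.8];
const expected = [1];
const errors = computed.map((value, i) => value - expected[i]);

// slice() copies the array first, because reverse() would otherwise
// flip this.layers in place and break the next forward pass.
layers
  .slice()
  .reverse()
  .reduce((errs, layer) => layer.backward(errs), errors);

console.log(calls); // "output" first, then "hidden"
```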

With our weights set correctly, we can send [0, 1] forward and get the correct result [0.99...]

10) Usage:

All together
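The original embedded listing is not available here, so what follows is a reconstruction from the steps above rather than the author's exact code. Assumptions: random starting weights in [-1, 1), 3 hidden neurons and 1 output neuron, XOR as training data, 10000 training iterations, and a "backward" that edits the weights directly instead of using a separate update step.

```javascript
const sigmoid = (x) => 1 / (1 + Math.exp(-x));
const sigmoidGradient = (x) => sigmoid(x) * (1 - sigmoid(x));

class Neuron {
  constructor(inputCount) {
    this.weights = Array.from({ length: inputCount }, () => Math.random() * 2 - 1);
  }
  forward(inputs) {
    this.inputs = inputs;
    this.sum = inputs.reduce((acc, x, i) => acc + x * this.weights[i], 0);
    return sigmoid(this.sum);
  }
  backward(error) {
    const scaled = sigmoidGradient(this.sum) * error;
    const inputErrors = this.weights.map((w) => w * scaled); // pre-update weights
    // deltas[i] = inputs[i] * sigmoidGradient * error, subtracted from the weights
    this.weights = this.weights.map((w, i) => w - this.inputs[i] * scaled);
    return inputErrors;
  }
}

class Layer {
  constructor(neuronCount, inputCount) {
    this.neurons = Array.from({ length: neuronCount }, () => new Neuron(inputCount));
  }
  forward(inputs) {
    return this.neurons.map((neuron) => neuron.forward(inputs));
  }
  backward(errors) {
    return this.neurons
      .map((neuron, i) => neuron.backward(errors[i]))
      .reduce((total, errs) => total.map((t, j) => t + errs[j]));
  }
}

class Network {
  constructor() {
    // 2 inputs + bias -> 3 hidden neurons; 3 hidden outputs + bias -> 1 output.
    this.layers = [new Layer(3, 3), new Layer(1, 4)];
  }
  forward(inputs) {
    return this.layers.reduce(
      (layerInputs, layer) => layer.forward([1].concat(layerInputs)),
      inputs
    );
  }
  learn(data, iterations = 10000) {
    for (let n = 0; n < iterations; n++) {
      for (const [inputs, expected] of data) {
        const errors = this.forward(inputs).map((value, i) => value - expected[i]);
        // Walk the layers back to front; slice(1) drops the error that
        // belongs to the bias input, which has no neuron behind it.
        this.layers
          .slice()
          .reverse()
          .reduce((errs, layer) => layer.backward(errs).slice(1), errors);
      }
    }
  }
}

// Assumed training data (XOR): [inputs, expected outputs]
const data = [
  [[0, 0], [0]],
  [[0, 1], [1]],
  [[1, 0], [1]],
  [[1, 1], [0]],
];

const network = new Network();
network.learn(data);
// Typically close to 1 after training; the exact value depends on the random start.
console.log(network.forward([0, 1]));
```

Because the starting weights are random, a run can occasionally get stuck and give a poor result; creating a new Network and training again usually fixes that.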
